Imagine it’s the year 2040, and a 12-year-old kid with diabetes pops a piece of chewing gum into his mouth. A temporary tattoo on his forearm registers the uptick in sugar in his bloodstream and sends that information to his phone. Data from this health-monitoring tattoo is also uploaded to the cloud so his mom can keep tabs on him. She has her own temporary tattoos—one for measuring the lactic acid in her sweat as she exercises and another for continuously tracking her blood pressure and heart rate.

Right now, such tattoos don’t exist, but the key technology is being worked on in labs around the world, including my lab at the University of Massachusetts Amherst. The upside is considerable: Electronic tattoos could help people track complex medical conditions, including cardiovascular, metabolic, immune system, and neurodegenerative diseases. Almost half of U.S. adults may be in the early stages of one or more of these disorders right now, although they don’t yet know it.

Technologies that allow early-stage screening and health tracking long before serious problems show up will lead to better outcomes. We’ll be able to look at factors involved in disease, such as diet, physical activity, environmental exposure, and psychological circumstances. And we’ll be able to conduct long-term studies that track the vital signs of apparently healthy individuals as well as the parameters of their environments. That data could be transformative, leading to better treatments and preventative care. But monitoring individuals over not just weeks or months but years can be achieved only with an engineering breakthrough: affordable sensors that ordinary people will use routinely as they go about their lives.

Building this technology is what’s motivating the work at my 2D bioelectronics lab, where we study atomically thin materials such as graphene. I believe these materials’ properties make them uniquely suited for advanced and unobtrusive biological monitors. My team is developing graphene electronic tattoos that anyone can place on their skin for chemical or physiological biosensing.

The Rise of Epidermal Electronics

The idea of a peel-and-stick sensor comes from the groundbreaking work of John Rogers and his team at Northwestern University. Their “epidermal electronics” embed state-of-the-art silicon chips, sensors, light-emitting diodes, antennas, and transducers into thin epidermal patches, which are designed to monitor a variety of health factors. One of Rogers’s best-known inventions is a set of wireless stick-on sensors for newborns in the intensive care unit that make it easier for nurses to care for the fragile babies—and for parents to cuddle them. Rogers’s wearables are typically less than a millimeter thick, which is thin enough for many medical applications. But to make a patch that people would be willing to wear all the time for years, we’ll need something much less obtrusive.

In search of thinner wearable sensors,

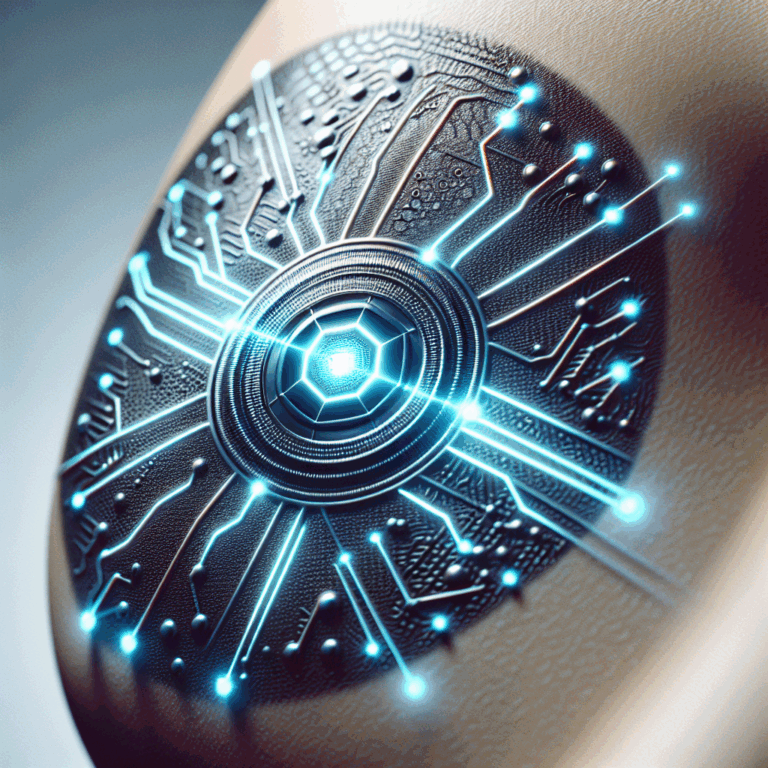

Deji Akinwande and Nanshu Lu, professors at the University of Texas at Austin, created graphene electronic tattoos (GETs) in 2017. Their first GETs, about 500 nanometers thick, were applied just like the playful temporary tattoos that kids wear: The user simply wets a piece of paper to transfer the graphene, supported by a polymer, onto the skin.

Graphene is a wondrous material composed of a single layer of carbon atoms. It’s exceptionally conductive, transparent, lightweight, strong, and flexible. When used within an electronic tattoo, it’s imperceptible: The user can’t even feel its presence on the skin. Tattoos using 1-atom-thick graphene (combined with layers of other materials) are roughly one-hundredth the thickness of a human hair. They’re soft and pliable, and conform perfectly to the human anatomy, following every groove and ridge.

The ultrathin graphene tattoos are soft and pliable, conforming to the skin’s grooves and ridges. Dmitry Kireev/The University of Texas at Austin

Some people mistakenly think that graphene isn’t biocompatible and can’t be used in bioelectronic applications. More than a decade ago, during the early stages of graphene development, some preliminary reports found that graphene flakes are toxic to live cells, mainly because of their size and the chemical doping used in the fabrication of certain types of graphene. Since then, however, the research community has realized that there are at least a dozen functionally different forms of graphene, many of which are not toxic, including oxidized sheets, graphene grown via chemical vapor deposition, and laser-induced graphene. For example, a 2024 paper in Nature Nanotechnology reported no toxicity or adverse effects when graphene oxide nanosheets were inhaled.

We know that the 1-atom-thick sheets of graphene being used to make e-tattoos are completely biocompatible. This type of graphene has already been used for neural implants without any sign of toxicity, and can even encourage the proliferation of nerve cells. We’ve tested graphene-based tattoos on dozens of subjects, who have experienced no side effects, not even minor skin irritation.

When Akinwande and Lu created the first GETs in 2017, I had just finished my Ph.D. in bioelectronics at the German research institute Forschungszentrum Jülich. I joined Akinwande’s lab, and more recently have continued the work at my own lab in Amherst. My collaborators and I have made substantial progress in improving the GETs’ performance; in 2022 we published a report on version 2.0, and we’ve continued to push the technology forward.

Electronic Tattoos for Heart Disease

According to the World Health Organization, cardiovascular diseases are the leading cause of death worldwide, with causal factors including diet, lifestyle, and environmental pollution. The long-term tracking of people’s cardiac activity—specifically their heart rate and blood pressure—would be a straightforward way to keep tabs on people who show signs of trouble. Our e-tattoos would be ideal for this purpose.

Measuring heart rate is the easier task, as the cardiac tissue produces obvious electrical signals when the muscles depolarize and repolarize to produce each heartbeat. To detect such electrocardiogram signals, we place a pair of GETs on a person’s skin, either on the chest near the heart or on the two arms. A third tattoo is placed elsewhere and used as a reference point. In what’s known as a differential amplification process, an amplifier takes in signals from all three electrodes but ignores signals that appear in both the reference and the measuring electrodes, and only amplifies the signal that represents the difference between the two measuring electrodes. This way, we isolate the relevant cardiac electrical activity from the surrounding electrophysiological noise of the human body. We’ve been using off-the-shelf amplifiers from companies like OpenBCI that are packaged into wireless devices.
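To make the differential amplification idea concrete, here is a minimal numerical sketch. It is not the amplifier firmware used in the lab: the gain, sampling rate, sine-wave "cardiac" signal, and 60-hertz interference are all illustrative assumptions. It only shows that a signal appearing equally on every electrode cancels, while the difference between the two measuring electrodes is amplified.

```python
import math


def differential_output(v_a, v_b, v_ref, gain=1000.0):
    """Amplify only the difference between the two measuring electrodes.

    Each measuring electrode is read relative to the reference electrode,
    so anything common to all three electrodes cancels in the subtraction.
    The gain value is an assumption chosen for illustration.
    """
    return gain * ((v_a - v_ref) - (v_b - v_ref))


def simulate(n_samples=500, fs=250.0):
    """Return amplified samples for a toy cardiac signal buried in mains hum."""
    out = []
    for i in range(n_samples):
        t = i / fs
        # Toy 1 mV "cardiac" waveform at 1.2 Hz (about 72 beats per minute).
        cardiac = 1e-3 * math.sin(2 * math.pi * 1.2 * t)
        # Assumed 50 mV interference picked up identically by every electrode.
        hum = 50e-3 * math.sin(2 * math.pi * 60.0 * t)
        v_a = hum + 0.5 * cardiac    # first measuring electrode
        v_b = hum - 0.5 * cardiac    # second measuring electrode
        v_ref = hum                  # reference electrode sees only the hum
        out.append(differential_output(v_a, v_b, v_ref))
    return out
```

In this toy setup the interference is 50 times larger than the cardiac signal at each electrode, yet the output of `simulate()` follows only the amplified cardiac waveform.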

Continuously measuring blood pressure via tattoo is much more difficult. We started that work with Akinwande of UT Austin in collaboration with Roozbeh Jafari of Texas A&M University (now at MIT’s Lincoln Laboratory). Surprisingly, the blood pressure monitors that doctors use today aren’t significantly different from the ones that doctors were using 100 years ago. You almost certainly have encountered such a device yourself. The machine uses a cuff, usually placed around the upper arm, that inflates to apply pressure on an artery until it briefly stops the flow of blood; then the cuff slowly deflates. As the cuff deflates, the machine records the beats as the heart pushes blood through the artery and measures the highest (systolic) and lowest (diastolic) pressure. While the cuff works well in a doctor’s office, it can’t provide a continuous reading or take measurements when a person is on the move. In hospital settings, nurses wake up patients at night to take blood pressure readings, and at-home devices require users to be proactive about monitoring their levels.

Graphene electronic tattoos (GETs) can be used for continuous blood pressure monitoring. Two GETs placed on the skin act as injecting electrodes [red] and send a tiny current through the arm. Because blood conducts electricity better than tissue, the current moves through the underlying artery. Four GETs acting as sensing electrodes [blue] measure the bioimpedance—the body’s resistance to electric current—which changes according to the volume of blood moving through the artery with every heartbeat. We’ve trained a machine learning model to understand the correlation between bioimpedance readings and blood pressure. Chris Philpot

We developed a new system that uses only stick-on GETs to measure blood pressure continuously and unobtrusively. As we described in a 2022 paper, the GET doesn’t measure pressure directly. Instead, it measures electrical bioimpedance—the body’s resistance to an electric current. We use several GETs to inject a small-amplitude current (50 microamperes at present), which goes through the skin to the underlying artery; GETs on the other side of the artery then measure the impedance of the tissue. The rich ionic solution of the blood within the artery acts as a better conductor than the surrounding fat and muscle, so the artery is the lowest-resistance path for the injected current. As blood flows through the artery, its volume changes slightly with each heartbeat. These changes in blood volume alter the impedance levels, which we then correlate to blood pressure.
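The arithmetic behind a bioimpedance reading can be sketched with Ohm’s law. Only the 50-microampere injected current comes from the description above; the sensed voltages and the resistances of the artery and tissue paths are made-up numbers, and modeling the two paths as simple parallel resistors is a simplifying assumption, not our measurement circuit.

```python
INJECTED_CURRENT_A = 50e-6  # 50 microamperes, the amplitude quoted in the text


def impedance_ohms(sensed_voltage_v, current_a=INJECTED_CURRENT_A):
    """Ohm's law: impedance magnitude = sensed voltage / injected current."""
    return sensed_voltage_v / current_a


def parallel(r1, r2):
    """Combined resistance of two conduction paths in parallel (simplified model)."""
    return (r1 * r2) / (r1 + r2)


def pulsatile_change(voltages_v):
    """Peak-to-peak impedance swing across a window of sensed voltages."""
    z = [impedance_ohms(v) for v in voltages_v]
    return max(z) - min(z)


# Hypothetical sensed voltages over one heartbeat, in volts.
beat = [2.50e-3, 2.52e-3, 2.49e-3]
swing = pulsatile_change(beat)  # a fraction of an ohm in this toy example

# More blood in the artery lowers that path's resistance, which lowers the
# combined impedance seen by the sensing electrodes (values are invented).
z_less_blood = parallel(100.0, 400.0)
z_more_blood = parallel(98.0, 400.0)
```

The small beat-to-beat swing, rather than the absolute impedance, is the quantity that carries the blood-volume information in this simplified picture.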

While there is a clear correlation between bioimpedance and blood pressure, it’s not a linear relationship—so this is where machine learning comes in. To train a model to understand the correlation, we ran a set of experiments while carefully monitoring our subjects’ bioimpedance with GETs and their blood pressure with a finger-cuff device. We recorded data as the subjects performed hand grip exercises, dipped their hands into ice-cold water, and did other tasks that altered their blood pressure.
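As a rough illustration of learning a nonlinear mapping from calibration data, the sketch below uses a k-nearest-neighbor regressor. This is a deliberately simple stand-in and not the model from our paper; the single impedance feature and every number in the calibration lists are synthetic values invented for the example.

```python
def knn_predict(train_x, train_y, x, k=3):
    """Predict y for x as the mean of the k nearest training targets.

    Nearest-neighbor regression makes no linearity assumption, which is why
    it serves here as a toy stand-in for a nonlinear learned model.
    """
    if k < 1 or k > len(train_x):
        raise ValueError("k must be between 1 and the number of training points")
    nearest = sorted(zip(train_x, train_y), key=lambda p: abs(p[0] - x))[:k]
    return sum(y for _, y in nearest) / k


# Synthetic calibration session for ONE subject: an impedance-derived feature
# (arbitrary units) paired with systolic pressure (mmHg) from a reference cuff.
calib_feature = [0.10, 0.12, 0.15, 0.18, 0.22, 0.27]
calib_systolic = [135.0, 130.0, 124.0, 119.0, 115.0, 112.0]

# Estimate pressure later from a new impedance reading for the same subject.
estimate = knn_predict(calib_feature, calib_systolic, 0.16, k=2)
```

Because the calibration lists belong to a single subject, a different person would need a separate calibration session under this approach.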

Our graphene tattoos were indispensable for these model-training experiments. Bioimpedance can be recorded with any kind of electrode—a wristband with an array of aluminum electrodes could do the job. However, the correlation between the measured bioimpedance and blood pressure is so precise and delicate that moving the electrodes by just a few millimeters (like slightly shifting a wristband) would render the data useless. Our graphene tattoos kept the electrodes at exactly the same location during the entire recording.

Once we had the trained model, we used GETs to again record those same subjects’ bioimpedance data and then derive from that data their systolic, diastolic, and mean blood pressure. We tested our system by continuously measuring their blood pressure for more than 5 hours, a tenfold longer period than in previous studies. The measurements were very encouraging. The tattoos produced more accurate readings than blood-pressure-monitoring wristbands did, and their performance met the criteria for the highest accuracy ranking under the IEEE standard for wearable cuffless blood-pressure monitors.

While we’re pleased with our progress, there’s still more to do. Each person’s biometric patterns are unique—the relationship between a person’s bioimpedance and blood pressure is uniquely their own. So at present we must calibrate the system anew for each subject. We need to develop better mathematical analyses that would enable a machine learning model to describe the general relationship between these signals.

Tracking Other Cardiac Biomarkers

With the support of the American Heart Association, my lab is now working on another promising GET application: measuring arterial stiffness and plaque accumulation within arteries, which are both risk factors for cardiovascular disease. Today, doctors typically check for arterial stiffness and plaque using diagnostic tools such as ultrasound and MRI, which require patients to visit a medical facility, utilize expensive equipment, and rely on highly trained professionals to perform the procedures and interpret the results.

Graphene tattoos can be used to continuously measure a person’s bioimpedance, or the body’s resistance to an electric current, which is correlated to the person’s blood pressure. Dmitry Kireev/The University of Texas at Austin and Kaan Sel/Texas A&M University

With GETs, doctors could easily and quickly take measurements at multiple locations on the body, getting both local and global perspectives. Since we can stick the tattoos anywhere, we can get measurements from major arteries that are otherwise difficult to reach with today’s tools, such as the carotid artery in the neck. The GETs also provide an extremely fast readout of electrical measurements. And we believe we can use machine learning to correlate bioimpedance measurements with both arterial stiffness and plaque—it’s just a matter of conducting the tailored set of experiments and gathering the necessary data.

Using GETs for these measurements would allow researchers to look deeper into how stiffening arteries and the buildup of plaque are related to the development of high blood pressure. Tracking this information for a long time in a large population would help clinicians understand the problems that eventually lead to major heart diseases—and perhaps help them find ways to prevent those diseases.

What Can You Learn from Sweat?

In a different area of work, my lab has just begun developing graphene tattoos for sweat biosensing. When people sweat, the liquid carries salts and other compounds onto the skin, and sensors can detect markers of good health or disease. We’re initially focusing on cortisol, a hormone associated with stress, stroke, and several disorders of the endocrine system. Down the line, we hope to use our tattoos to sense other compounds in sweat, such as glucose, lactate, estrogen, and inflammation markers.

Several labs have already introduced passive or active electronic patches for sweat biosensing. The passive systems use a chemical indicator that changes color when it reacts with specific components in sweat. The active electrochemical devices, which typically use three electrodes, can detect substances across a wide range of concentrations and yield accurate data, but they require bulky electronics, batteries, and signal processing units. And both types of patches use cumbersome microfluidic chambers for sweat collection.

In our GETs for sweat, we use the graphene as a transistor. We modify the graphene’s surface by adding certain molecules, such as antibodies, that are designed to bind to specific targets. When a target substance interacts with the antibody, it produces a measurable electrical signal that then changes the resistance of the graphene transistor. That resistance change is converted into a readout that indicates the presence and concentration of the target molecule.
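One plausible way to turn a resistance change into a concentration readout is to look it up on a calibration curve. The sketch below assumes such a curve has been measured beforehand and interpolates linearly between its points. The baseline resistance, the sign and size of the response, the calibration pairs, and the concentration units are all hypothetical; this is not a description of our actual readout electronics.

```python
def relative_change(r_measured, r_baseline):
    """Fractional change in transistor resistance relative to its baseline."""
    return (r_measured - r_baseline) / r_baseline


def concentration_from_response(response, calibration):
    """Linearly interpolate a concentration from (response, concentration) pairs.

    Responses outside the calibrated range are clamped to the nearest end
    point. The pairs are assumed to have distinct response values.
    """
    pts = sorted(calibration)
    if response <= pts[0][0]:
        return pts[0][1]
    if response >= pts[-1][0]:
        return pts[-1][1]
    for (r0, c0), (r1, c1) in zip(pts, pts[1:]):
        if r0 <= response <= r1:
            return c0 + (c1 - c0) * (response - r0) / (r1 - r0)


# Hypothetical calibration: fractional resistance change vs. concentration
# (arbitrary units), as if measured with known reference solutions.
CALIBRATION = [(0.00, 0.0), (0.02, 10.0), (0.05, 50.0), (0.08, 100.0)]

response = relative_change(r_measured=1035.0, r_baseline=1000.0)
reading = concentration_from_response(response, CALIBRATION)
```

Clamping at the ends of the curve is a conservative design choice for a sketch like this: it avoids extrapolating beyond concentrations that were never calibrated.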

We’ve already successfully developed standalone graphene biosensors that can detect food toxins, measure ferritin (a protein that stores iron), and distinguish between the COVID-19 and flu viruses. Those standalone sensors look like chips, and we place them on a tabletop and drip liquid onto them for the experiments. With support from the U.S. National Science Foundation, we’re now integrating this transistor-based sensing approach into GET wearable biosensors that can be stuck on the skin for direct contact with the sweat.

We’ve also improved our GETs by adding microholes to allow for water transport, so that sweat doesn’t accumulate under the GET and interfere with its function. Now we’re working to ensure that enough sweat is coming from the sweat ducts and into the tattoo, so that the target substances can react with the graphene.

The Way Forward for Graphene Tattoos

To turn our technology into user-friendly products, there are a few engineering challenges. Most importantly, we need to figure out how to integrate these smart e-tattoos into an existing electronic network. At the moment, we have to connect our GETs to standard electronic circuits to deliver the current, record the signal, and transmit and process the information. That means the person wearing the tattoo must be wired to a tiny computing chip that then wirelessly transmits the data. Over the next five to ten years, we hope to integrate the e-tattoos with smartwatches. This integration will require a hybrid interconnect to join the flexible graphene tattoo to the smartwatch’s rigid electronics.

In the long term, I envision 2D graphene materials being used for fully integrated electronic circuits, power sources, and communication modules. Microelectronic giants such as Imec and Intel are already pursuing electronic circuits and nodes made from 2D materials instead of silicon.

Perhaps in 20 years, we’ll have 2D electronic circuits that can be integrated with soft human tissue. Imagine electronics embedded in the skin that continuously monitor health-related biomarkers and provide real-time feedback through subtle, user-friendly displays. This advancement would offer everyone a convenient and noninvasive way to stay informed and proactively manage their own health, beginning a new era of human self-knowledge.

This article appears in the March 2025 print issue as “A Graphene Biosensor Tattoo.”